In a Science Robotics article, PhD candidate Jinda Cui and CSE chair Jeff Trinkle examine current research in learned robot manipulation and offer nine promising areas for future exploration

What if a robot could organize your closet or chop your vegetables? A sous chef in every home could someday be a reality.

Yet while advances in artificial intelligence and machine learning have made better robotics possible, a wide gap remains between what humans and robots can do. Closing that gap will require overcoming a number of obstacles in robot manipulation, the ability of robots to manipulate their environments and adapt to changing stimuli.

PhD candidate Jinda Cui and Jeff Trinkle, professor and chair of the Department of Computer Science and Engineering, are interested in those challenges. They work in an area called learned robot manipulation, in which robots are “trained” through machine learning to manipulate objects and environments like humans do.

“I’ve always felt that for robots to be really useful they have to pick stuff up, they have to be able to manipulate it and put things together and fix things, to help you off the floor and all that,” says Trinkle, who has conducted decades of research in robot manipulation and is well known for his pioneering work in simulating multibody systems under contact constraints. “It takes so many technical areas together to look at a problem like that.”

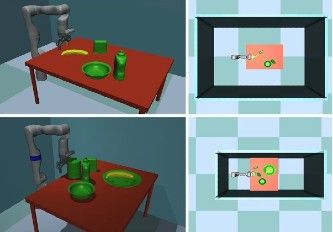

“In robot manipulation, learning is a promising alternative to traditional engineering methods and has demonstrated great success, especially in pick-and-place tasks,” says Cui, whose work has been focused on the intersection of robot manipulation and machine learning. “Although many research questions still need to be answered, learned robot manipulation could potentially bring robot manipulators into our homes and businesses. Maybe we will see robots mopping our tables or organizing closets in the near future.”

In a review article in Science Robotics called “Toward next-generation learned robot manipulation,” Cui and Trinkle summarize, compare, and contrast research in learned robot manipulation through the lens of adaptability and outline promising research directions for the future.

Cui and Trinkle emphasize the usefulness of modularity in learning design and point to the need for appropriate representations for manipulation tasks. They also note that modularity enables customization.

Cui says that those in traditional engineering may doubt the reliability of learned skills for robot manipulation because such skills are usually “black-box” solutions, meaning that researchers may not know when or why a learned skill fails.

“As our paper points out, appropriate modularization of learned manipulation skills may open up ‘black-boxes’ and make them more explainable,” says Cui.

The nine areas that Cui and Trinkle propose as particularly promising for advancing the capacity and adaptability of learned robot manipulation are:

1. Representation learning with more sensing modalities, such as tactile, auditory, and temperature signals.
2. Advanced simulators for manipulation that are as fast and as realistic as possible.
3. Task/skill customization.
4. “Portable” task representations.
5. Informed exploration for manipulation, in which active learning methods can find new skills efficiently by exploiting contact information.
6. Continual exploration, or a way for a learned skill to improve continually after robot deployment.
7. Massively distributed/parallel active learning.
8. Hardware innovations that simplify more challenging manipulations, such as in-hand dexterous manipulation.
9. Real-time performance since, eventually, learned manipulation skills will be tested in the real world.

Read the full story in the Lehigh University News Center.

Story by Lori Friedman